Gargantua Labs · Core systems for AI · est. 2026

Building the core systems AI needs.

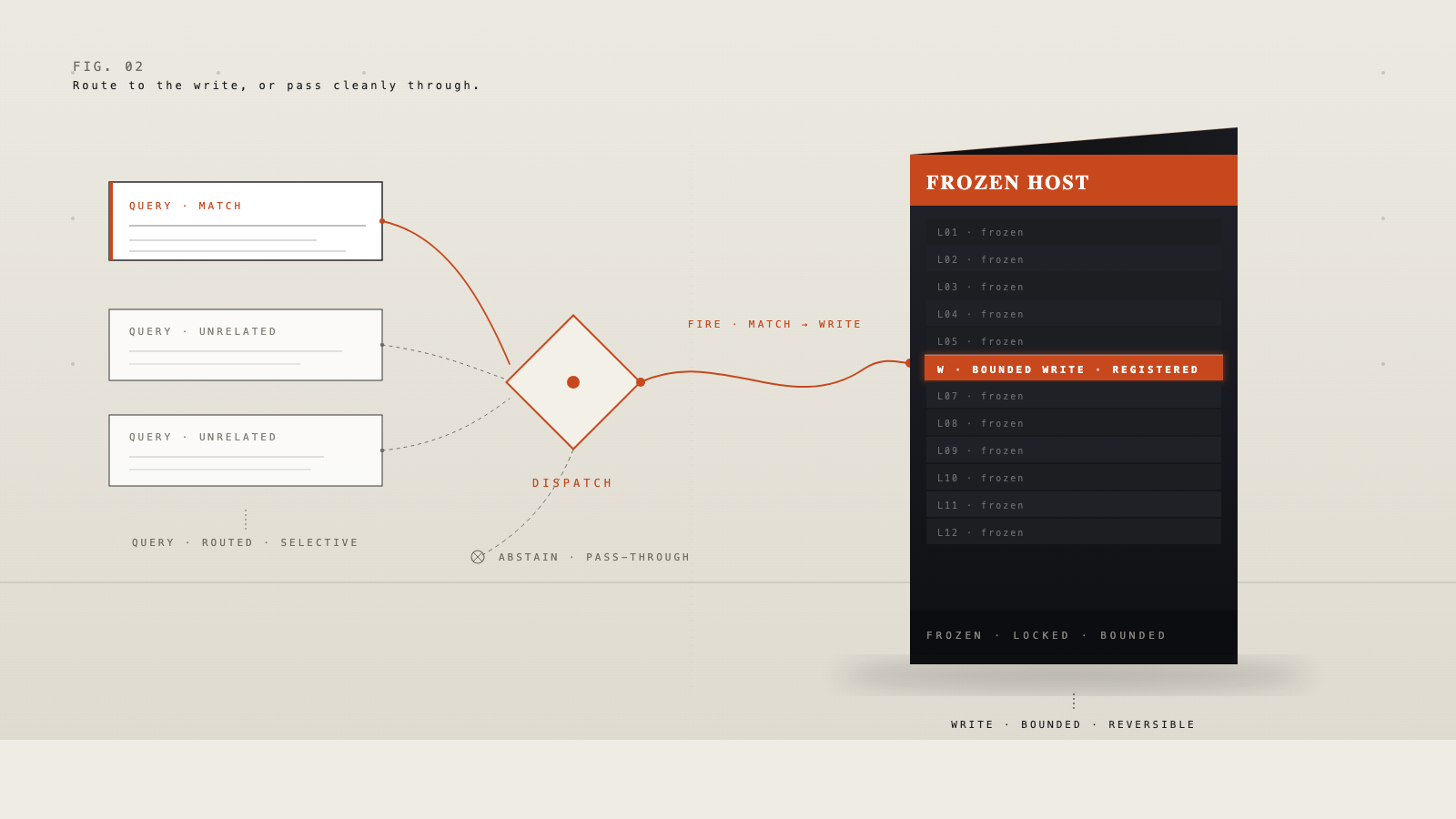

AI is advancing fast, but the systems around it are still incomplete. Gargantua Labs builds foundational technology for memory, adaptation, control, and security so future AI systems can become more capable, reliable, and safe.

1 · Product in MVP

Seed · Round open

2026 · Founded

CA · Based in California

§04 — Invest

Back the lab building AI’s missing core systems.

We’re raising a seed round to scale engineering on DeltaWrite, expand integrations with frontier-model partners, and ship the next core systems in our pipeline. We prefer to start with a conversation: if you back technical founders building durable infrastructure, we’d like to talk.